Last Friday’s demand disconnection event, or blackout, resulted from a relatively rare occurrence: two large power generation units disconnecting from the power system in quick succession. This caused the frequency to drop and demand disconnections to occur across the country. But how did this happen, and what lessons can be learned from it? Kyle Martin of consultancy LCP takes a closer look at some of the key factors.

Background

Unplanned outages or trips at power stations happen relatively often, with 64 having occurred in the UK this year already. However, two large generators independently tripping within 15 seconds of each other is very rare, with LCP calculating that this should be expected to occur about once every 25 years.

National Grid has several mechanisms available to manage these events, which normally happen without consumers being aware. When a power station trips it lowers the frequency of the electricity system. We use a 50Hz system in the UK, meaning that our thermal power stations spin at 3,000 RPM, synchronised across the system. National Grid has a licence condition to keep the frequency within 1% of 50Hz (49.5Hz to 50.5Hz), and when a unit trips it does so by using several ancillary services (backup systems) to rebalance the system.
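As a rough illustration of those figures, the sketch below derives the 3,000 RPM shaft speed from the 50Hz system frequency and works out the limits of a 1% band. It assumes a two-pole synchronous machine, which is typical of large steam and gas turbine sets but is not stated in the article; the function names are purely illustrative.

```python
NOMINAL_HZ = 50.0


def synchronous_speed_rpm(frequency_hz: float, poles: int = 2) -> float:
    """Shaft speed of a synchronous machine: 120 * f / number of poles."""
    if poles <= 0 or poles % 2:
        raise ValueError("poles must be a positive even number")
    return 120.0 * frequency_hz / poles


def frequency_band(nominal_hz: float = NOMINAL_HZ,
                   tolerance: float = 0.01) -> tuple[float, float]:
    """Lower and upper limits of a +/- tolerance band around nominal."""
    margin = nominal_hz * tolerance
    return nominal_hz - margin, nominal_hz + margin


# Two-pole machine (assumed) on a 50Hz system
print(synchronous_speed_rpm(NOMINAL_HZ))  # 3000.0

low, high = frequency_band()
print(f"{low:.1f}Hz to {high:.1f}Hz")
```

A four-pole machine on the same system would turn at half that speed, which is why the 3,000 RPM figure applies to two-pole sets only.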

What caused the loss in power?

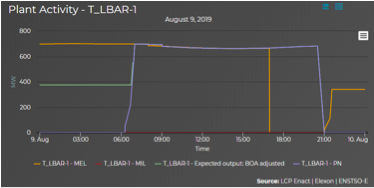

The graph above shows Little Barford power station tripping at 16:52, when its declared maximum output (in orange) dropped from 664MW to 0MW. The purple line shows what it expected to be producing at the time. This loss caused the system frequency to drop rapidly. Almost instantaneously, the Hornsea One wind farm (units 2 and 3) tripped, dropping its output from 756MW to 0MW and increasing the severity of the event.

At the time of the trips there was over 5GW of power available in the Balancing Market, so we can rule out an issue with a lack of capacity on the system or any problem with the level of capacity procured through the Capacity Market. But whether enough capacity was secured through the ancillary services market is another question.

Inertia is an important element for operating a secure system

One interesting aspect of Friday’s incident was the relatively high level of non-synchronous generation on the system, consisting of significant interconnector imports and nearly 9GW of transmission-connected wind generation, as well as more wind and solar connected at the distribution level. Renewables such as wind and solar, as well as interconnectors, are non-synchronous and therefore don’t provide inertia. This is important because inertia slows down the frequency change, giving ancillary services more time to respond and resolve the problem.

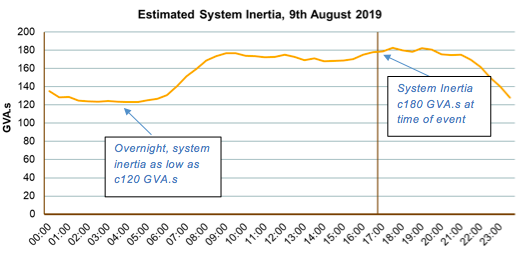

The graph above provides an estimate of the level of inertia on the system on the 9th of August. We calculate that system inertia was 180 GVA.s at the time of the event, and as low as 120 GVA.s overnight. This suggests inertia was manageable at the time of the trips, well above the 130 GVA.s level National Grid has previously published as being the lowest amount of inertia the system can manage. Had the event occurred overnight, there may have been further issues, as inertia was then below that minimum acceptable level.

Interestingly, Julian Leslie of National Grid has already discussed the prospect of National Grid paying for inertia services in the future, which will be needed if future power stations (or other technologies such as flywheels) are going to be built to provide this service. Batteries can produce synthetic inertia, but this still takes c. 0.5 seconds to respond, so having synchronised units providing inertia instantaneously is still a must for operating the power system safely.
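To give a feel for why these inertia figures matter, the sketch below estimates the initial rate of change of frequency (RoCoF) using the standard swing-equation approximation, df/dt = -f0 × ΔP / (2 × E), where E is the kinetic energy stored in the system. It is a simplification under stated assumptions rather than LCP's or National Grid's model: it treats the quoted GVA.s inertia as stored energy, treats both trips as simultaneous, and ignores load damping and any frequency response. The function names are illustrative.

```python
NOMINAL_HZ = 50.0


def initial_rocof_hz_per_s(loss_mw: float, inertia_gva_s: float,
                           nominal_hz: float = NOMINAL_HZ) -> float:
    """Initial RoCoF from the swing equation: -f0 * dP / (2 * E_k)."""
    if inertia_gva_s <= 0:
        raise ValueError("inertia must be positive")
    kinetic_energy_mva_s = inertia_gva_s * 1000.0  # GVA.s -> MVA.s
    return -nominal_hz * loss_mw / (2.0 * kinetic_energy_mva_s)


def drop_before_response_hz(rocof_hz_per_s: float, delay_s: float) -> float:
    """Frequency lost while waiting delay_s for a response (constant RoCoF assumed)."""
    return abs(rocof_hz_per_s) * delay_s


combined_loss_mw = 664.0 + 756.0  # Little Barford plus Hornsea One

for label, inertia in [("at the time of the event", 180.0), ("overnight", 120.0)]:
    rocof = initial_rocof_hz_per_s(combined_loss_mw, inertia)
    drop = drop_before_response_hz(rocof, delay_s=0.5)
    print(f"{label}: {rocof:.3f} Hz/s, {drop:.3f} Hz lost in 0.5 s")
```

On these assumptions the same 1,420MW loss produces a RoCoF roughly 50% steeper at 120 GVA.s than at 180 GVA.s, so any response that takes half a second to act starts from a lower frequency.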

What happened to the frequency?

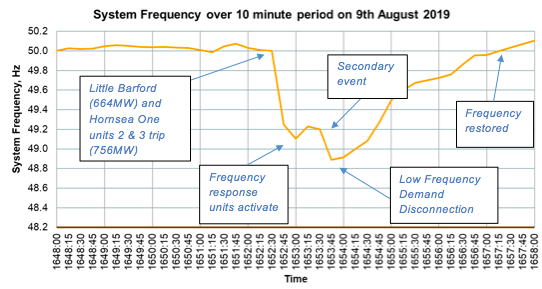

The graph above shows how quickly the frequency deviated following the loss of both Little Barford and Hornsea One (units 2 and 3).

National Grid should hold enough operating reserve to cover the “largest in-feed loss”, which is predominantly driven by large generators and interconnectors. However, Little Barford’s 664MW trip and Hornsea’s 756MW trip, a combined loss of 1,420MW, were followed by a secondary event, and there wasn’t enough time to arrest the combined drop in frequency before demand disconnections were triggered.
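The gap between a reserve sized for a single loss and a double trip can be sketched as below. The article does not give the reserve National Grid was actually holding, so the example makes a loudly hypothetical assumption: that the reserve exactly equals the larger of the two units that tripped. The helper name `shortfall_mw` is illustrative.

```python
def shortfall_mw(losses_mw: list[float], reserve_mw: float) -> float:
    """Generation lost beyond what the held reserve can replace (0 if fully covered)."""
    return max(0.0, sum(losses_mw) - reserve_mw)


losses = {"Little Barford": 664.0, "Hornsea One (units 2 and 3)": 756.0}

# HYPOTHETICAL: reserve sized to the larger single loss; not a figure from the article.
assumed_reserve_mw = max(losses.values())

# Either trip alone is covered...
for name, loss in losses.items():
    print(name, shortfall_mw([loss], assumed_reserve_mw))

# ...but both together are not.
print("combined", shortfall_mw(list(losses.values()), assumed_reserve_mw))
```

Under that assumption each trip on its own leaves no shortfall, while the two together leave 664MW uncovered, which is the essence of the "largest in-feed loss" problem when losses coincide.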

Did National Grid procure enough ancillary services?

One other factor that may have added to the problem, and may explain the secondary drop in frequency, is small embedded generators also tripping because of the sudden initial drop in frequency. Such trips have been an ongoing issue for several years, and Ofgem has continued its work to make embedded generation more resilient to frequency disturbances, with a modification[1] being approved at the start of August. This will make embedded generation units below 5MW more resilient to Rate of Change of Frequency (RoCoF) events in the future.
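The mechanism described above, a generator's protection tripping when frequency moves too fast, can be sketched as a simple threshold check on successive frequency measurements. This is a simplified illustration, not the logic of any real relay or of the modification itself: real protection measures RoCoF over a defined window and may apply a time delay. The sample readings and both threshold settings are hypothetical values chosen for the example, not figures from the article.

```python
def rocof_series(times_s: list[float], freqs_hz: list[float]) -> list[float]:
    """Finite-difference rate of change of frequency between consecutive samples."""
    if len(times_s) != len(freqs_hz):
        raise ValueError("times and frequencies must have the same length")
    pairs = list(zip(times_s, freqs_hz))
    return [(f1 - f0) / (t1 - t0) for (t0, f0), (t1, f1) in zip(pairs, pairs[1:])]


def relay_trips(times_s: list[float], freqs_hz: list[float],
                threshold_hz_per_s: float) -> bool:
    """True if any sampled RoCoF magnitude exceeds the relay setting."""
    return any(abs(r) > threshold_hz_per_s
               for r in rocof_series(times_s, freqs_hz))


# HYPOTHETICAL measurements: frequency falling by about 0.2 Hz/s
times = [0.0, 0.5, 1.0, 1.5]
freqs = [50.0, 49.9, 49.8, 49.75]

SENSITIVE_SETTING = 0.125  # Hz/s, illustrative value only
RELAXED_SETTING = 1.0      # Hz/s, illustrative value only

print(relay_trips(times, freqs, SENSITIVE_SETTING))  # True
print(relay_trips(times, freqs, RELAXED_SETTING))    # False
```

With the same disturbance, the more sensitive setting disconnects the generator and deepens the shortfall, while the relaxed setting rides through it.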

Disconnections need to be looked at

The demand disconnections that occurred caused significant disruption, with transport (railways, traffic lights and airports), hospitals and hundreds of thousands of homes losing power. We need to review whose demand we disconnect, and how, in future low frequency events. Demand Side Response (DSR) and smart networks could provide a much more managed response with no loss of critical services. Questions also need to be asked of the non-energy sector as to why, for example, railways took several hours to restore power while National Grid had brought the electricity system back to normal operating parameters within an hour.

Summary

National Grid are already reviewing the incident, with an interim report being produced for Ofgem and a full review due to be completed in September. Although there will be lessons to learn and improvements to be made to how the electricity system and other sectors function, there are several conclusions we can draw:

- This wasn’t a case of insufficient generation capacity as there was significant capacity available through the Balancing Market;

- The level of inertia was within operating limits when the trip occurred;

- Ofgem’s changes to embedded RoCoF will help with future events but should be monitored;

- The level of operational reserve may need to be increased in light of this event;

- National Grid can procure more frequency response and inertia, but this would come at a cost; and

- Demand disconnections need to be reviewed.

Whatever the outcome of this review, we should bear in mind that any rushed decisions not supported by careful analysis of the data will quickly lead to increased costs for consumers and might even slow down our progress towards decarbonising the generation system.

Kyle Martin is head of market insight at LCP. For more information visit www.lcp.uk.com/energy-analytics or email kyle.martin@lcp.uk.com

[1] Distribution Code DC0079 ‘Frequency Changes during Large Disturbances and their Impact on the Total System’


IMO the grid responded well with minimal impact to domestic energy consumers; pretty much all back up within one hour which for an unplanned event must be applauded. Then we have to look hard at industrial commercial consumers and how responsive was their switchgear/backup or machinery. This will need consideration Site by Site / Kit by Kit ~Trains and Ipswich hospital stick out in this regard. There was also a major contribution by timing ~ Friday after a quiet week in the holiday season ~ chances are that many engineers who could have intervened or taken sharp corrective actions were simply `on their way home` or not in the best place to react. Looking at Trains I understand many needed manual engineer intervention to re-start. Lessons learned must consider how to manage energy information; review equipment design and maintenance; the numbers of skilled engineering staff needed to mitigate against a repeat event.

What is the data source for the graph in your image “Screen-Shot-2019-08-15-at-10.36.51.png”?